If you’ve arrived at this article because ‘machine learning’ (ML) caught your attention, you’ve made a great choice. A healthy supply chain relies heavily on the balance between demand and supply. While firms do their best to minimize the gap between the two, umpteen factors disrupt this balance and render the demand planning exercise futile. Off-the-shelf products are available to help with demand forecasting, but they do a sloppy job of forecasting products that sell in high volume and experience heavy volatility.

Fortunately for demand planners, ML can now help improve the forecast from 40% of actual to 70% of actual. There’s a rule of thumb that suggests planned inventory can be reduced by 2.5% for every 1% improvement in the forecast. Going by this guidance, ML clearly has the potential to help demand planners reduce planned inventory and improve service levels considerably. It can bring available-to-promise to life and match demand with production plans.
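The rule of thumb above is simple arithmetic, and a quick sketch makes it concrete. The function name, the 1,000-unit figure and the 4-point improvement are made up for illustration, and the sketch assumes the rule applies linearly, which is unlikely to hold for very large improvements.

```python
def planned_inventory_after(planned_units, forecast_improvement_pts, reduction_per_pt=2.5):
    """Apply the rule of thumb linearly: each point of forecast improvement
    trims planned inventory by `reduction_per_pt` percent (capped at 100%)."""
    reduction_pct = min(100.0, reduction_per_pt * forecast_improvement_pts)
    return planned_units * (100.0 - reduction_pct) / 100.0


# Hypothetical SKU: 1,000 planned units and a 4-point forecast improvement.
reduced = planned_inventory_after(1000, 4)
print(reduced)  # 900.0
```

In other words, a 4-point improvement would take 10% off planned inventory under this assumption.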

The demand planner’s problem statement

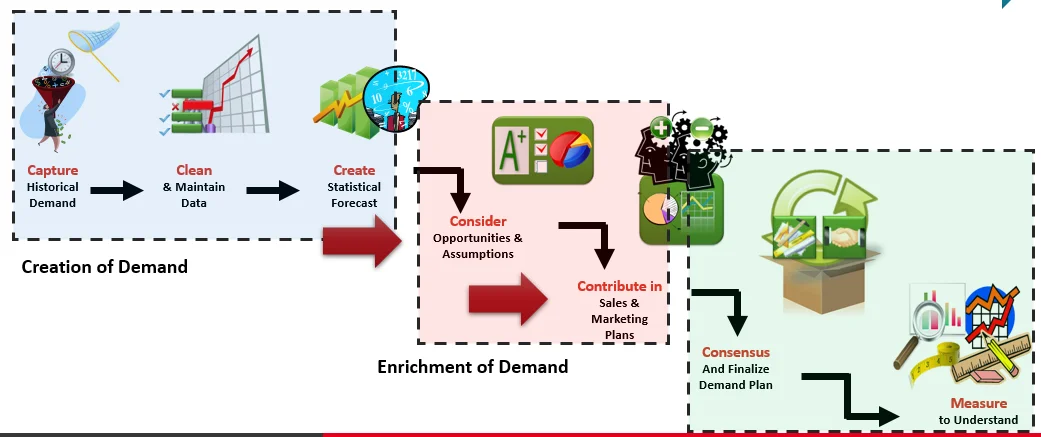

Before we get into how ML plays a role, let’s understand what goes on in the life of a demand planner running a forecast for an SKU. For any demand planner building a forecast, two variables classify SKUs: (a) sales volume and (b) demand volatility. The combination of these variables determines which kind of model to use for an accurate forecast.
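Here is a minimal sketch of that two-variable classification. It assumes volatility is measured by the coefficient of variation (standard deviation divided by mean) of weekly sales, which is one common choice and not necessarily the one your planning tool uses. The function name, both thresholds and the sales figures are hypothetical.

```python
from statistics import mean, pstdev


def classify_sku(weekly_sales, volume_threshold=500.0, cv_threshold=0.5):
    """Place an SKU in a volume/volatility quadrant.

    Both thresholds are illustrative assumptions, not industry standards.
    """
    avg = mean(weekly_sales)
    # Coefficient of variation: spread of sales relative to their average level.
    cv = pstdev(weekly_sales) / avg if avg > 0 else 0.0
    volume = "high volume" if avg >= volume_threshold else "low volume"
    volatility = "high volatility" if cv >= cv_threshold else "low volatility"
    return volume, volatility


steady = [1000, 1020, 980, 1010, 990]   # made-up weekly unit sales
spiky = [200, 2500, 100, 1800, 400]     # same average, far bigger swings

print(classify_sku(steady))
print(classify_sku(spiky))
```

Both SKUs sell 1,000 units a week on average, yet only the second one lands in the quadrant that gives traditional models trouble.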

For a low volatility SKU, statistical models like moving averages and time series with added regressors will provide a high degree of forecast accuracy. Conversely, when we talk of a high sales volume/high demand volatility SKU, our traditional forecasting algorithms fail to provide an accurate forecast.
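To put rough numbers on that contrast, the sketch below runs a plain three-period moving average over two made-up sales histories and scores each with the mean absolute percentage error (MAPE). The data, the window size and the function names are assumptions chosen for illustration only.

```python
def moving_average_forecast(history, window=3):
    """One-step-ahead forecasts: each period is predicted as the mean of the
    `window` periods before it. Returns len(history) - window forecasts."""
    return [sum(history[i - window:i]) / window for i in range(window, len(history))]


def mape(actuals, forecasts):
    """Mean absolute percentage error, in percent (actuals must be non-zero)."""
    return 100 * sum(abs(a - f) / a for a, f in zip(actuals, forecasts)) / len(actuals)


steady = [100, 102, 98, 101, 99, 103, 100, 97]      # low volatility SKU
volatile = [100, 240, 60, 310, 90, 20, 280, 150]    # high volatility SKU

window = 3
steady_mape = mape(steady[window:], moving_average_forecast(steady, window))
volatile_mape = mape(volatile[window:], moving_average_forecast(volatile, window))

print(f"steady SKU MAPE:   {steady_mape:.1f}%")
print(f"volatile SKU MAPE: {volatile_mape:.1f}%")
```

The same model that tracks the steady SKU closely misses the volatile one by a wide margin, because an average of recent periods says little about the next swing.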

Failure to predict demand accurately leads to higher inventory costs, lost sales, lower customer satisfaction, reduced margins and a lot of other similar repercussions. So why do current demand planning models fail to address the problem effectively? Let’s do a deep dive into that now.

The fall of statistical demand planning models

Why do traditional forecasting models fail for high sales volume/high volatility SKUs? I’ve worked with several demand planners and have seen two reasons why this happens:

- The forecast algorithms used by demand planners rely on best-fit model selection, which doesn’t work well for high volume/high volatility SKUs.

- These algorithms don’t factor external variables into their logic: the PMI, inflation, social media sentiment, competitor pricing, weather forecasts and demand sensing, to name a few.

The traditional POS data-based demand planning approach doesn’t work well when demand is correlated with external variables like the ones listed above. There is clearly a need to radically improve the traditional ways of generating a demand forecast.

Why machine learning rarely fails

Here are three reasons why ML-led models hold up where traditional forecasting models fall short:

- Love for volatility (depth of volatility): ML-led models act as a black hole for any variable that might otherwise reduce forecast accuracy. A lousy co-brand performance or a great brand ambassador: it all feeds into the forecast.

- Intelligent sensing (breadth of volatility): ML-led models recognize both linear and non-linear dependencies. They can identify complex patterns, trends and relationships between variables that traditional forecasting models cannot capture.

- Mimics human evolution: just as humans get wiser with experience and age, ML models get better at prediction as they work on more and more data.
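The point about non-linear dependencies is easier to see with a toy example. The sketch below compares a straight-line fit with a k-nearest-neighbours predictor, used here as a simple stand-in for an ML-led model and not as the method any particular product uses. The external driver is temperature, and every number is made up: demand is assumed to dip in mild weather and climb when it is cold or hot.

```python
from statistics import mean

# Made-up training data: external driver (temperature) versus unit demand.
temperature = [0, 5, 10, 15, 20, 25, 30, 35, 40]
demand = [420, 310, 220, 150, 120, 160, 230, 320, 410]

# Made-up held-out observations used only for scoring.
test_temperature = [12, 22, 33]
test_demand = [190, 130, 280]


def fit_line(xs, ys):
    """Ordinary least squares for a single driver; returns (slope, intercept)."""
    x_bar, y_bar = mean(xs), mean(ys)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    return slope, y_bar - slope * x_bar


def knn_predict(train_x, train_y, x, k=2):
    """Average the demand of the k training points whose driver is closest to x."""
    nearest = sorted(zip(train_x, train_y), key=lambda pair: abs(pair[0] - x))[:k]
    return mean(y for _, y in nearest)


def mean_abs_error(predict):
    """Average absolute miss on the held-out observations."""
    return mean(abs(predict(t) - d) for t, d in zip(test_temperature, test_demand))


slope, intercept = fit_line(temperature, demand)
linear_mae = mean_abs_error(lambda t: slope * t + intercept)
knn_mae = mean_abs_error(lambda t: knn_predict(temperature, demand, t))

print(f"straight-line fit, mean absolute error: {linear_mae:.1f} units")
print(f"k-nearest neighbours, mean absolute error: {knn_mae:.1f} units")
```

The straight line comes out nearly flat because the cold-weather and hot-weather effects cancel each other, so it predicts roughly average demand everywhere. The neighbour-based predictor follows the U-shape and misses by far less on the held-out points.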